The crossroads of AI, and why we're not talking about it nearly enough

The biggest leap since the dawn of humanity

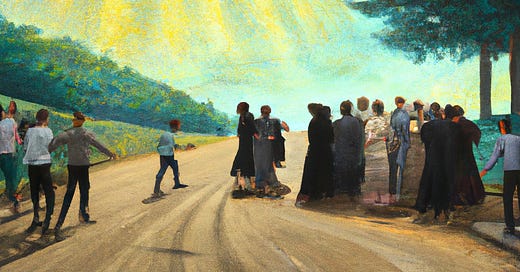

15 years ago I was walking back from a music festival in a local town. There was a group of about 10 of us, and we didn’t know the area well. We arrived at a crossroads and started arguing about the way. As we bickered, a friend of mine in the year above pulled out his iPhone and showed us, clear as day, not only the correct direction but how long it would take us to arrive.

I didn’t really grasp technology at this point, but this was one of those moments when you know something about the world has changed. The power to put such a complex computer into the palm of your hand, connected to the internet, offered limitless flexibility.

I felt that sense of awe again for the first time about 6 months ago, when I first used ChatGPT.

But this article isn’t about ChatGPT. There’s enough discourse on that already. For the early adopters, it’s revolutionised work. ChatGPT does half our coding and the bulk of our writing, and acts as a project manager and strategic planner. It’s our most useful employee, and it costs $20/month.

No, this article is about where AI is headed, why we are woefully unprepared for its implications and why, even though AI is the two-letter acronym on everyone’s lips, it’s still not being talked about anywhere near enough. Today, we’re going to talk about how a sufficiently advanced AI will change civilisation more than any other technology in history.

AI Progress

It’s fairly easy to extrapolate what a linear progression of ChatGPT would look like. White-collar work such as accountancy, consultancy, programming and law gradually gets more leveraged by AI: one accountant can now do the work of 10. Jobs either shift, as they did in the computer revolution, to ones that don’t even exist yet, or they are replaced completely.

But it’s unlikely the progression of AI will be linear. History tells us that disruptive technologies like this never are. Productivity growth in the 50 years following the industrial revolution was an order of magnitude bigger than in the 300,000 years of human history before it. Smartphone adoption went from 2% to 81% in the 10 years after 2005. What would this kind of growth mean for AI?

Superintelligence

When most people talk about the ‘end game’ of AI, they talk about the first creation of a superintelligent AI. This is not a new concern. As early as 1965, I. J. Good, a brilliant early researcher in the field, had this to say about a superintelligent machine:

"Let an ultraintelligent machine be defined as a machine that can far surpass all the intellectual activities of any man however clever. Since the design of machines is one of these intellectual activities, an ultraintelligent machine could design even better machines; there would then unquestionably be an 'intelligence explosion,' and the intelligence of man would be left far behind. Thus the first ultraintelligent machine is the last invention that man need ever make provided that the machine is docile enough to tell us how to keep it under control."

The thesis is quite simple: as soon as an AI is created that has around the same general intelligence as a human, that machine’s intelligence will, in a matter of seconds, completely eclipse that of a human. The intelligent machine has access to computational power orders of magnitude greater than a single brain, and practically unlimited memory through hard drives. It will utilise these resources to multiply itself a thousandfold and pool its intelligence together into a single, superintelligent hive mind. This cycle rinses and repeats until something is created that has intelligence we can’t even comprehend.

When most people think of a superintelligent computer, they tend to anthropomorphise it. They think of a very intelligent, nerdy human. Something clever, but that can fundamentally be understood. With an intelligence gap so large, this is unlikely to be the case. The gap between a superintelligence and us would be more akin to the gap between us and an ant.

The singularity

The moment at which this intelligence is created is called the singularity: the hypothetical future point in time at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization. Many who write about the singularity argue that only a single AI can exist in it, because the intelligence explosion is so rapid that even a head start of a few hours would result in a far superior intelligence.

I first came across the idea of the singularity in Nick Bostrom’s book ‘Superintelligence’. I remember it being one of the more disturbing books I’ve ever read. The saving grace was that, at the time, it felt incredibly fanciful. AI at that point was limited to very narrow applications: Google Search, algorithmic pricing on apps like Uber, and managing the enemy in strategy video games. It seemed unlikely that this would impact my lifetime. We were just too far off from creating something with intelligence that could ever be compared to the broad intelligence of a human.

I’m no expert on AI, but it certainly seems like we aren’t far off creating human-like intelligence. Many would argue that LLMs (large language models like GPT) aren’t capable of reasoning and are just very good at pattern recognition, but it certainly doesn’t feel that way when you are using GPT-4. It feels like you are having an intelligent, nuanced conversation with an expert in whatever role you have assigned to it.

Perhaps it really does just appear more intelligent than it actually is, but perhaps not. After all, our understanding of how the human brain works is comically limited. We still have no idea what consciousness is and only have a very rough understanding of how abstract thought and complex reasoning take place. Maybe human reasoning is itself simply very advanced pattern recognition.

My point here is that the approach of the singularity does not feel like sci-fi anymore. It’s an issue we should take seriously, because once the singularity is reached, there is no going back. Civilisation will change forever, and it’s up to us to ensure the superintelligence is programmed with the correct motivation.

Motivation

Another thing we don’t know about superintelligence (maybe you are following the theme here) is what motivation it will have. Humans get their motivation from chasing pleasure (the carrot) and avoiding pain (the stick). I write this article because I get pleasure from the act of writing and see the value in sharing my thoughts with an audience, but also because I fear not living up to society’s expectations.

There is no reason to think an AI would have such hopes and fears, or that it would ‘feel’ in the sense that humans do. If I send this email to 13,000 people and 6,000 read it, dopamine rushes to my brain, and I feel good. If only 4,000 read it, I don’t get this rush and feel very normal. These are biological responses, and while there is a chance a superintelligence could be made by biological means (imagine some huge brain grown in a lab), it’s looking more and more likely that this intelligence will run on transistors, not neurons.

Because of this lack of inherent motivation, motivation must be programmed. When I use ChatGPT, it is programmed, using reinforcement learning, to give me a useful answer to my question, and most of the time it works pretty well. But even if it didn’t, it wouldn’t be a big issue. It would just be another software product no one would use. But GPT is not a superintelligent AI. If it were, the issue of incorrect motivation programming would be much more extreme.

To understand the motivation problem in a superintelligent AI, we can use the famous paperclip factory analogy. Imagine you create a superintelligent AI to build paperclips. That AI might start by taking an existing factory and optimising it to create more paperclips. It may then build robots to harvest the raw materials to make more factories and produce more paperclips. Within a few days it has created hundreds of paperclip factories across the world, tearing up the Amazon and the ice caps in the process. The AI then looks at human civilisation. It just sees a collection of resources to be turned into paperclips. It creates an army of drones and tanks to destroy humanity and flatten our infrastructure to be turned into paperclip factories. It then turns its focus to the cosmos, constructing thousands of self-replicating von Neumann probes, which promptly turn the whole reachable universe into paperclip factories.

This example might seem a little insane, and it is, but it does highlight the problem of a superintelligent AI encoded with the wrong motivations, and it’s something people in the AI research field invest a tonne of time in trying to understand. How do we align the incentives of AI with those of humans?

But even in the case of perfect alignment with humanity, a superintelligent AI will have consequences we can’t even begin to imagine. Let’s turn our heads to how life could look if an AI was programmed with some pretty uncontroversial desires society might have of it.

Wealth creation

I used to think wealth creation was a zero-sum game. If I make money, someone has to lose money. If I’m working as a pot washer at a restaurant, the restaurant is losing wealth by paying me, and they in turn are gaining wealth from customers, who are losing wealth by paying £15 for 20p of pizza dough and a few very average toppings (apart from the crispy duck, that was banging).

Of course, this is not how wealth works. Wealth is literally created from nothing. You can take a broken car, fix it up and sell it for more. No wealth has been lost here; the value of the car has simply increased. The internet combined with code massively amplifies wealth creation. I can create a piece of software with a few hours of focused work and sell it forever without ever touching it again.

Up until now though, wealth creation has had to involve some kind of human ingenuity, whether that’s the know-how to fix up a car, or the creative insight to create a product that fills a market need. In these last few weeks we are starting to see the first businesses being built where everything from idea to execution is driven by AI. Jackson Fall gave GPT-4 $100 and has already turned it into thousands, following its instructions to create a site with content and products in the sustainable living niche. Most conservative estimates reckon AI will affect 1 in 5 jobs in the next few years, but again this is thinking from the paradigm of linear AI growth without superintelligence.

With a superintelligence there would be no need for anyone to work. Wealth would be created so quickly and efficiently that we’d be living, at least materially, like kings. While this would solve a tonne of problems, there’s a load it would leave open. People would need to find ways other than work to find meaning.

Healthcare

AI is already having a tonne of impact on healthcare. But for the sake of argument, let’s look at the biggest impact it could have.

In the Global North, people predominantly die of old age. Ageing increases the risk of heart disease, cancers and nearly every other life-threatening disease. Modern medicine can cure many of these diseases, but we have no way to stop the underlying problem of ageing.

There are no fundamental laws saying people must age. The laws of thermodynamics show we can’t conjure matter out of nowhere, and the special theory of relativity shows we can’t travel faster than the speed of light, but we have no fundamental theories to suggest we have to age. Ageing is just the process of cells becoming damaged through repeated replication.

As a species we are a long way from solving ageing (even if it’s actually something we would want to solve; I personally can’t imagine a life more boring than one that goes on forever), but a superintelligence would likely make quick work of the problem. The moment a superintelligent agent is created, it’s likely people will at least have the option to stop death.

Control

Infinite money and unimaginably good healthcare might not seem like bad things at all, and most would agree that freeing up the work that humans don’t want to do, and providing solutions to complex problems, would be a great benefit of having a superintelligent AI. But, as we explored earlier, it’s difficult to ensure these are the results we’ll achieve, because a superintelligent AI is something so far beyond our comprehension (remember the ant analogy).

That’s why last week, Yuval Noah Harari (Sapiens, good chap), Elon Musk (Tesla, shit bloke) and thousands of others signed an open letter published by the Future of Life Institute:

"We call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4. This pause should be public and verifiable, and include all key actors. If such a pause cannot be enacted quickly, governments should step in and institute a moratorium."

The letter argues for the pause because “AI labs are locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.”

It’s a nice initiative, but can you really stop technology? Also, would the US government have any incentive to stop OpenAI, an American company, from progressing?

The AI Arms Race

The reality is that right now we are locked in an arms race. A superintelligent agent would be infinitely more powerful than any nuclear bomb. Such an agent could cripple a country’s economy, wipe out its currency, disable all infrastructure and have its citizens under house arrest in a matter of hours. Is America really going to throttle OpenAI, take a back seat and wait for the Chinese to create their own advanced AI models? It’s really not that dissimilar from the Manhattan Project. Everyone involved knows it’s going to be a shitshow, everyone knows the weapon created is going to be something so dire it should never have had a place in human society, but the game theory requires it to get created anyway.

Conclusion

In conclusion, the rapid advancements in artificial intelligence, and the looming prospect of a superintelligent AI, are forces that can’t be overstated. We are at a juncture in human history where the potential for exponential growth in wealth, healthcare, and efficiency is being matched by an equal potential for catastrophic consequences if we fail to align AI's motivation with our own.

15 years ago when I was lost at a crossroads, technology led me in the right direction. Today we must lead the technology.

WAGMI & I Love You

Tom

"With a superintelligence there would be no need for anyone to work. Wealth would be created so quickly and efficiently that we’d be living, at least materially, like kings. While this would solve a tonne of problems, there’s a load it would leave open, people would need to find other ways that work to find meaning."

This kind of thinking is short-sighted and ignores the fact that the vast majority of people around the world do not do work that can be easily automated and replaced by AI (or to put it another way, the tech world =/= The World). Physical labor, conservation + environmental work, and services aren't going to be replaced by AI + robotics anytime soon (if ever), at least not without wholesale changes to the way we structure our physical environments. If we look at futurisms where basic needs are met by replicators and increasingly powerful computers fulfill most of our tasks (e.g. Star Trek), what we find within them are modes of organization and values that prioritize human ingenuity, physical labor, and exploration, even when it's much more rational to send an unmanned probe to Ceti Alpha V than the Enterprise.

AI might very well eliminate a lot of white-collar 'bullshit jobs', or at the very least increase productivity levels to the point where revenue growth is completely decoupled from headcount. It will probably also clear out a lot of mediocre content creators, exacerbating the Pareto distribution that already exists on their favorite platforms. I suspect this is where a lot of the paranoia around AI eating the world comes from. But the world is made of atoms, not bits, and at some point one has to get into the business of moving atoms in order to create wealth and have an impact.